Scoring and Optimization

Revision as of 09:40, 25 August 2015

Lecture video: https://www.youtube.com/watch?v=oxhc0Nv_ySw&index=11&list=PLpiLOsNLsfmbeH-b865BwfH15W0sat02V

Features of MT Models

Phrase Translation Probabilities

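Phrase translation probabilities are calculated from occurrences of phrase pairs extracted from the parallel training data. Usually, MT systems work with the following two conditional probabilities:

$$P(\bar{f} \mid \bar{e}) = \frac{\text{count}(\bar{f}, \bar{e})}{\text{count}(\bar{e})} \qquad P(\bar{e} \mid \bar{f}) = \frac{\text{count}(\bar{f}, \bar{e})}{\text{count}(\bar{f})}$$

These probabilities are estimated by simply counting how many times (for the first formula) we saw $\bar{f}$ aligned to $\bar{e}$ and how many times we saw $\bar{e}$ in total. For example, based on the following excerpt from the (sorted) extracted phrase pairs, we estimate that $P(\text{naznačena v programu} \mid \text{estimated in the programme}) = 3/9 \approx 0.33$.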

estimated in the programme ||| naznačena v programu
estimated in the programme ||| naznačena v programu
estimated in the programme ||| naznačena v programu
estimated in the programme ||| odhadován v programu
estimated in the programme ||| odhadovány v programu
estimated in the programme ||| odhadovány v programu
estimated in the programme ||| předpokládal program
estimated in the programme ||| v programu uvedeným
estimated in the programme ||| v programu uvedeným
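The counting described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `phrase_translation_probs` and the in-memory list of `source ||| target` lines are hypothetical, and a real phrase extraction pipeline works on much larger, sorted files.

```python
from collections import Counter
from fractions import Fraction

# The excerpt of extracted phrase pairs shown above ("e ||| f").
EXTRACTED = [
    "estimated in the programme ||| naznačena v programu",
    "estimated in the programme ||| naznačena v programu",
    "estimated in the programme ||| naznačena v programu",
    "estimated in the programme ||| odhadován v programu",
    "estimated in the programme ||| odhadovány v programu",
    "estimated in the programme ||| odhadovány v programu",
    "estimated in the programme ||| předpokládal program",
    "estimated in the programme ||| v programu uvedeným",
    "estimated in the programme ||| v programu uvedeným",
]


def phrase_translation_probs(lines):
    """Relative-frequency estimates of P(f|e) and P(e|f).

    Hypothetical helper: returns two dicts, the first keyed by (f, e),
    the second keyed by (e, f).
    """
    pair_counts, e_counts, f_counts = Counter(), Counter(), Counter()
    for line in lines:
        e, f = (part.strip() for part in line.split("|||"))
        pair_counts[(e, f)] += 1
        e_counts[e] += 1
        f_counts[f] += 1
    # P(f|e) = count(f, e) / count(e);  P(e|f) = count(f, e) / count(f)
    p_f_given_e = {(f, e): Fraction(c, e_counts[e])
                   for (e, f), c in pair_counts.items()}
    p_e_given_f = {(e, f): Fraction(c, f_counts[f])
                   for (e, f), c in pair_counts.items()}
    return p_f_given_e, p_e_given_f


p_fe, p_ef = phrase_translation_probs(EXTRACTED)
for (f, e), p in sorted(p_fe.items()):
    print(f"P({f} | {e}) = {p}")
```

Within this excerpt every Czech phrase is seen with only one English phrase, so all the P(e|f) estimates come out as 1. This is exactly the kind of unreliable estimate that the smoothing in the next section addresses.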

Lexical Weights

Lexical weights are a method for smoothing the phrase table. Infrequent phrases have unreliable probability estimates; for instance, many long phrases occur together only once in the corpus, resulting in $P(\bar{f} \mid \bar{e}) = P(\bar{e} \mid \bar{f}) = 1$. Several methods exist for computing lexical weights. The most common one is based on the word alignment inside the phrase. The probability of each foreign word $f_i$ is estimated as the average of the lexical translation probabilities $w(f_i \mid e_j)$ over the English words aligned to it. Thus, for the phrase pair $(\bar{f}, \bar{e})$ with the set of alignment points $a$, the lexical weight is:

$$\text{lex}(\bar{f} \mid \bar{e}, a) = \prod_{i=1}^{n} \frac{1}{|\{j \mid (i,j) \in a\}|} \sum_{(i,j) \in a} w(f_i \mid e_j)$$
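A minimal sketch of the alignment-based lexical weight may help make the averaging concrete. The function name `lexical_weight`, the toy probabilities in `w`, and the handling of unaligned foreign words (scored against a `NULL` token, a common convention that the text above does not state) are all assumptions made for illustration.

```python
NULL = "NULL"  # assumed placeholder token for unaligned foreign words


def lexical_weight(f_words, e_words, alignment, w):
    """Product over foreign words of the average w(f_i | e_j) over aligned e_j.

    alignment: set of (i, j) pairs linking f_words[i] to e_words[j].
    w: dict mapping (foreign_word, english_word) to a lexical probability.
    """
    weight = 1.0
    for i, f in enumerate(f_words):
        aligned = [e_words[j] for (fi, j) in alignment if fi == i]
        if not aligned:
            # Assumption: unaligned words are treated as aligned to NULL.
            aligned = [NULL]
        weight *= sum(w.get((f, e), 0.0) for e in aligned) / len(aligned)
    return weight


# Toy example with made-up lexical probabilities (not from real data).
f_words = ["v", "programu"]
e_words = ["in", "the", "programme"]
alignment = {(0, 0), (1, 1), (1, 2)}
w = {
    ("v", "in"): 0.5,
    ("programu", "the"): 0.1,
    ("programu", "programme"): 0.7,
}
print(lexical_weight(f_words, e_words, alignment, w))
```

In this toy case "v" contributes 0.5 and "programu" contributes the average of 0.1 and 0.7, so the result is approximately 0.2.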

Language Model

https://www.coursera.org/course/nlp

https://www.youtube.com/playlist?list=PLaRKlIqjjguC-20Glu7XVAXm6Bd6Gs7Qi

Word and Phrase Penalty

Distortion Penalty

Decoding

Phrase-Based Search

Decoding in SCFG

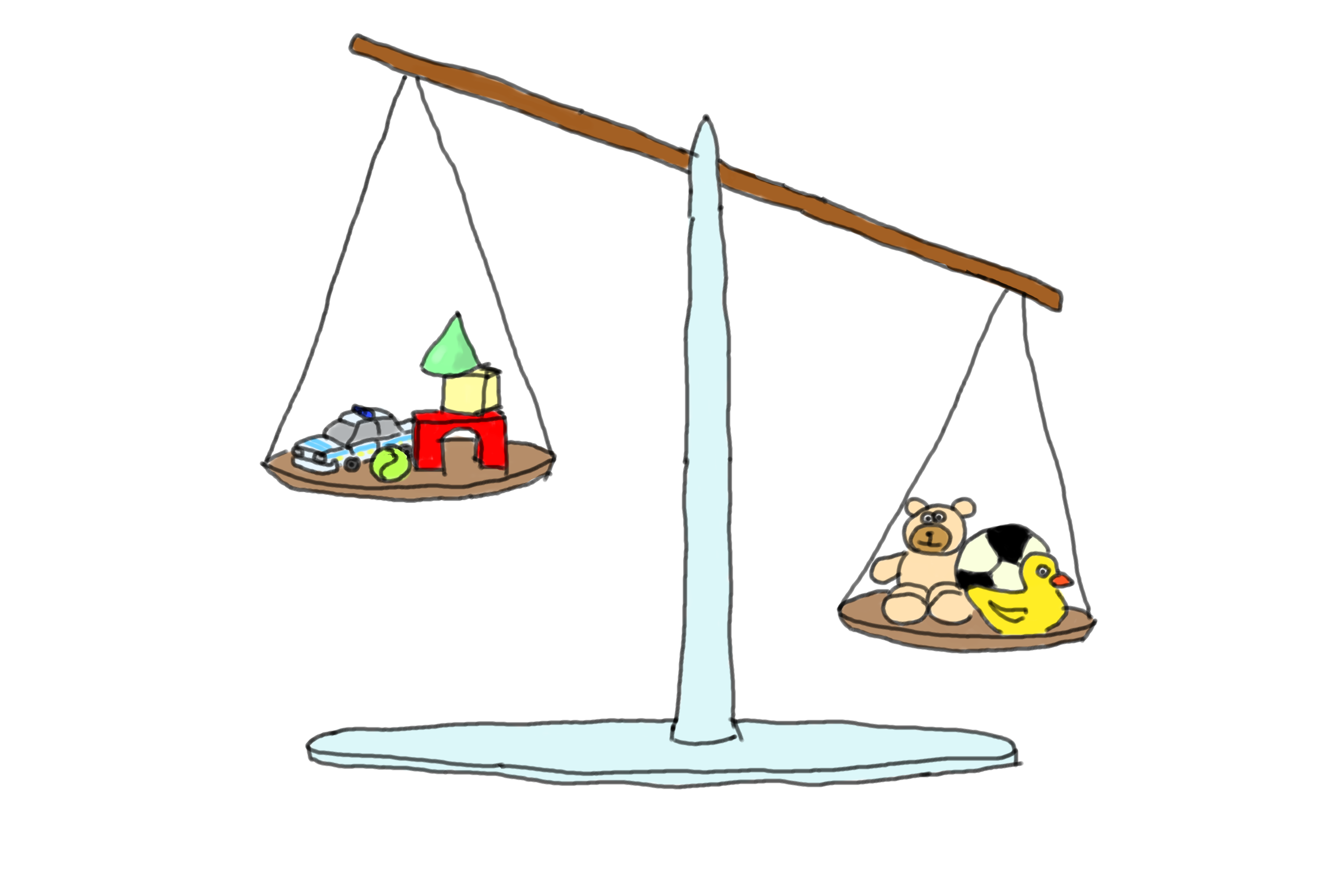

Optimization of Feature Weights

Note that there have even been shared tasks in model optimization: one, by invitation only, in 2011, and one in 2015, the WMT15 Tuning Task.