Automatic MT Evaluation

Revision as of 09:07, 10 February 2015

Lecture video: web TODO Youtube

Reference Translations

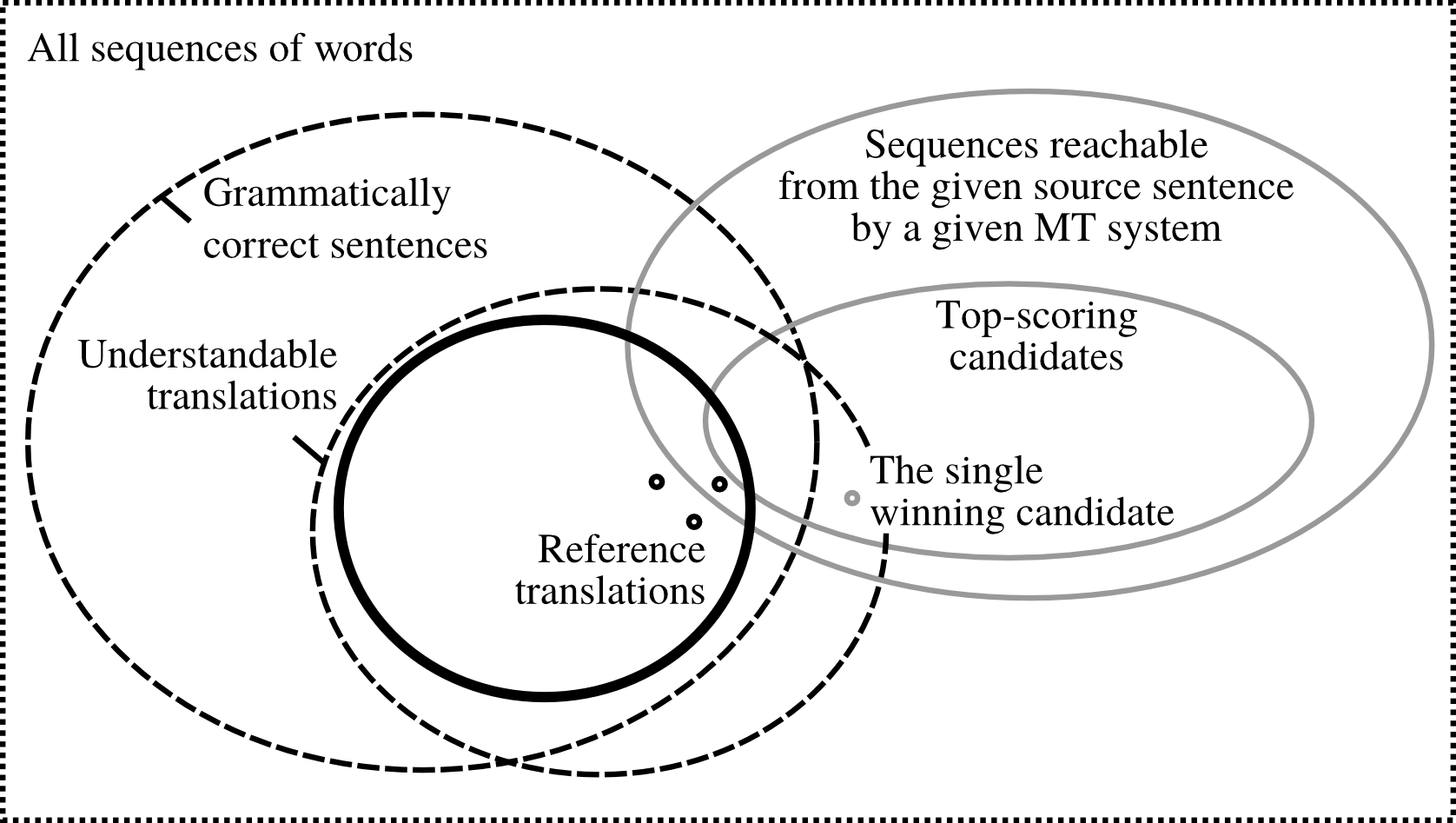

The picture above[1] illustrates the issue of reference translations.

Out of all possible sequences of words in the given language, only some are grammatically correct sentences. An overlapping set is formed by understandable translations of the source sentence (note that these are not necessarily grammatical). Possible reference translations can then be viewed as a subset of the intersection of these two sets. Only some of these can be reached by the MT system. Typically, we have only a few reference translations at our disposal; often we have just a single reference.

PER

Position-independent error rate[2] (PER) is a simple measure which counts the number of words which are identical in the MT output and the reference translation and divides
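A minimal Python sketch of the idea, assuming whitespace tokenization and one commonly used normalization: matched words are counted as a bag-of-words overlap, surplus hypothesis words are penalized, and the result is divided by the reference length. The function name `per` and this exact normalization are assumptions for illustration; the definition above does not spell out the denominator.

```python
from collections import Counter


def per(hypothesis: str, reference: str) -> float:
    """Position-independent error rate (illustrative sketch, not a reference implementation)."""
    hyp = hypothesis.split()
    ref = reference.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # Bag-of-words overlap: each reference word can be matched at most
    # as many times as it occurs, and word order is ignored.
    matches = sum((Counter(hyp) & Counter(ref)).values())

    # Assumption: hypothesis words beyond the reference length count as errors.
    surplus = max(0, len(hyp) - len(ref))

    return 1.0 - (matches - surplus) / len(ref)


if __name__ == "__main__":
    # Same words in a different order: no position-independent errors.
    print(per("the cat sat", "sat the cat"))
    # Two of four reference words are missing from the output.
    print(per("the cat", "the cat sat on"))
```

Because word order is ignored, a scrambled but lexically complete output gets the same score as a perfect one, which is why PER is usually read as an optimistic estimate of translation errors.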

BLEU

References

- [1] Ondřej Bojar, Matouš Macháček, Aleš Tamchyna, Daniel Zeman. Scratching the Surface of Possible Translations

- [2] C. Tillmann, S. Vogel, H. Ney, A. Zubiaga, H. Sawaf. Accelerated DP Based Search for Statistical Translation

- [3] Kishore Papineni, Salim Roukos, Todd Ward, Wei-Jing Zhu. BLEU: a Method for Automatic Evaluation of Machine Translation http://www.aclweb.org/anthology/P02-1040.pdf