Word Alignment

| Lecture video: | web TODO Youtube |
|---|---|
| Exercises: | IBM-1 Alignment Model |

[Lecture video on YouTube](https://www.youtube.com/watch?v=mqyMDLu5JPw)

In the previous lecture, we saw how to find sentences in parallel data which correspond to each other. Now we move one step further and look for words which correspond to each other in our parallel sentences.

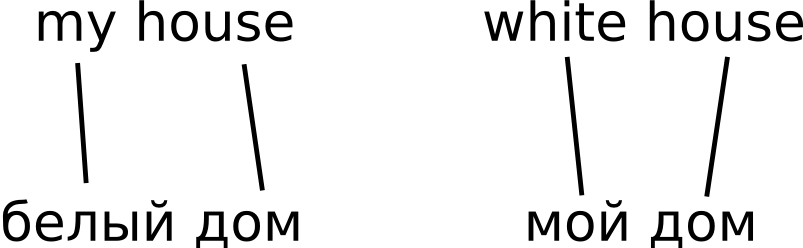

This task is usually called word alignment. Note that once we have solved it, we get a (probabilistic) translation dictionary for free. See our sample "parallel corpus":

For example, if we look for translations of the Russian word "дом", we simply collect all its alignment links (lines leading from the word "дом") in our data and estimate translation probabilities from them. In our tiny example, we get P("house"|"дом") = 2/2 = 1.
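The counting described above can be sketched in a few lines of Python. This is only an illustration: the list of alignment links is hypothetical toy data (only the two "дом"/"house" links come from the example in the text), and `translation_probs` is a name invented here.

```python
from collections import Counter, defaultdict

# Hypothetical alignment links, one (source word, target word) pair per link.
# Only the two "дом" -> "house" links mirror the example in the text.
links = [
    ("дом", "house"),
    ("дом", "house"),
    ("мой", "my"),
    ("большой", "big"),
    ("большой", "large"),
]

def translation_probs(links):
    """Relative-frequency estimate of P(target | source) from alignment links."""
    pair_counts = Counter(links)
    source_counts = Counter(src for src, _ in links)
    probs = defaultdict(dict)
    for (src, tgt), count in pair_counts.items():
        # Share of this source word's links that lead to this target word.
        probs[src][tgt] = count / source_counts[src]
    return dict(probs)

probs = translation_probs(links)
print(probs["дом"])  # {'house': 1.0}
```

A word whose links lead to several different translations, such as the made-up "большой" entry, gets its probability mass split between them in proportion to the link counts.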

IBM Model 1

The so-called IBM models[1] are the best-known, and still the most commonly used, approach to word alignment.

In our lecture, we only go through the first, simplest model.
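As a rough illustration of what training this first model involves, here is a minimal sketch of the standard expectation-maximization procedure for IBM Model 1. It rests on several assumptions that are not part of the lecture text: the toy corpus is made up, the function name `train_ibm1` is hypothetical, the NULL word is left out for brevity, and the number of iterations is fixed instead of testing for convergence.

```python
from collections import defaultdict

def train_ibm1(corpus, iterations=10):
    """Estimate t(e | f) with EM; returns a dict keyed by (e, f).

    corpus: list of (source_words, target_words) sentence pairs.
    Simplification (assumption): no NULL source word is added.
    """
    target_vocab = {e for _, tgt in corpus for e in tgt}
    uniform = 1.0 / len(target_vocab)
    t = defaultdict(lambda: uniform)  # uniform initialization

    for _ in range(iterations):
        count = defaultdict(float)  # expected count of (e, f) links
        total = defaultdict(float)  # expected count of links from f
        for src, tgt in corpus:
            for e in tgt:
                # E-step: distribute one link for e over all source words.
                norm = sum(t[(e, f)] for f in src)
                for f in src:
                    frac = t[(e, f)] / norm
                    count[(e, f)] += frac
                    total[f] += frac
        # M-step: renormalize expected counts into probabilities.
        t = defaultdict(float, {(e, f): c / total[f] for (e, f), c in count.items()})
    return t

# Hypothetical three-sentence parallel corpus.
corpus = [
    (["мой", "дом"], ["my", "house"]),
    (["мой", "кот"], ["my", "cat"]),
    (["большой", "дом"], ["big", "house"]),
]

t = train_ibm1(corpus)
for e in ["house", "my", "big"]:
    print(e, round(t[(e, "дом")], 3))
```

On this toy corpus the probability mass for "дом" shifts toward "house" with each iteration, because "house" is the only word that co-occurs with "дом" in both sentences containing it.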

Model Limitations

IBM Model 1 only looks at lexical translation probabilities. It has no notion of word position, so it is happy to align two neighboring words in the source sentence to two completely different positions in the target. It also disregards how many alignment links lead to a particular word (it does not model so-called word fertility), so in some cases it can even align the whole source sentence to a single target-side word, leaving the other target words unaligned. Higher IBM models address these limitations by adding probability estimates for distortion and fertility.
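Both limitations follow from the fact that, with only lexical probabilities, the best alignment is chosen independently for every word. The sketch below makes this concrete. The function `best_links` and both score tables are hypothetical, with values made up for illustration. Which side does the choosing depends on the direction the model is trained in; following the wording above, each source word picks a target word here.

```python
def best_links(source, target, lex):
    """For each source word, independently pick the target word
    with the highest lexical score (positions are never consulted)."""
    return [(s, max(target, key=lambda t: lex.get((s, t), 0.0))) for s in source]

source = ["большой", "дом"]

# Hypothetical lexical scores, made up for illustration.
lex = {
    ("большой", "big"): 0.6, ("большой", "house"): 0.4,
    ("дом", "house"): 0.9, ("дом", "big"): 0.1,
}

# No notion of position: reordering the target sentence changes nothing.
links = best_links(source, ["big", "house"], lex)
links_reordered = best_links(source, ["house", "big"], lex)
print(links == links_reordered)  # True

# No notion of fertility: with a skewed table, nothing stops both
# source words from choosing the same target word.
skewed = {
    ("большой", "house"): 0.7, ("большой", "big"): 0.3,
    ("дом", "house"): 0.9, ("дом", "big"): 0.1,
}
collapsed = best_links(source, ["big", "house"], skewed)
print(collapsed)  # [('большой', 'house'), ('дом', 'house')]
```

In the second case "big" ends up with no alignment link at all, which is the behavior that distortion and fertility parameters in the higher models are meant to discourage.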

Exercises

References

- [1] Peter F. Brown, Stephen A. Della Pietra, Vincent J. Della Pietra, Robert L. Mercer. The Mathematics of Statistical Machine Translation: Parameter Estimation. Computational Linguistics 19(2), 1993.