Word Alignment

| Lecture video: | web TODO Youtube |
|---|---|
| Exercises: | IBM-1 Alignment Model |

Lecture video: https://www.youtube.com/watch?v=mqyMDLu5JPw

In the previous lecture, we saw how to find sentences in parallel data which correspond to each other. Now we move one step further and look for words which correspond to each other in our parallel sentences.

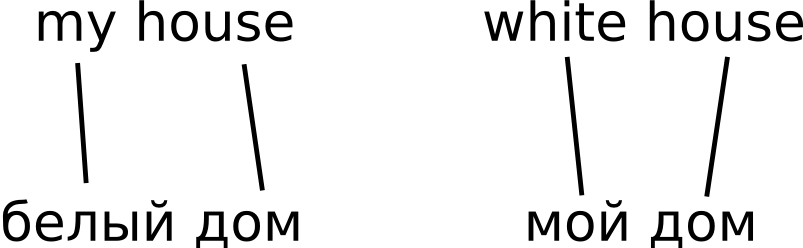

This task is usually called word alignment. Note that once we have solved it, we get a (probabilistic) translation dictionary for free. See our sample "parallel corpus":

For example, if we look for translations of the Russian word "дом", we simply collect all alignment links in our data and estimate translation probabilities from them. In our tiny example, we get:

$$P(\text{"house"} \mid \text{"dom"}) = \frac{2}{2} = 1$$
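The estimate above is a relative frequency: the number of alignment links from "дом" to "house", divided by the number of all links from "дом". A minimal Python sketch of that counting follows. The two-sentence corpus and the name `estimate_translation_probs` are hypothetical, made up for illustration; they are not the sample corpus from the lecture.

```python
from collections import Counter, defaultdict

# Hypothetical word-aligned corpus (an assumption, not the lecture's data):
# each item holds the (source_word, target_word) alignment links
# of one sentence pair.
aligned_corpus = [
    [("мой", "my"), ("дом", "house")],
    [("дом", "house"), ("большой", "big")],
]


def estimate_translation_probs(corpus):
    """Relative-frequency estimate of P(target | source) from alignment links."""
    link_counts = defaultdict(Counter)
    for links in corpus:
        for src, tgt in links:
            link_counts[src][tgt] += 1

    probs = {}
    for src, tgt_counts in link_counts.items():
        total = sum(tgt_counts.values())  # all links leaving this source word
        probs[src] = {tgt: count / total for tgt, count in tgt_counts.items()}
    return probs


probs = estimate_translation_probs(aligned_corpus)
print(probs["дом"]["house"])  # 2 links out of 2 -> 1.0
```

With a larger corpus, a source word would usually be linked to several different target words, and each inner dictionary would then hold a proper distribution summing to 1.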

Exercises

References

- Peter F. Brown, Stephen A. Della Pietra, Vincent J. Della Pietra, Robert L. Mercer. The Mathematics of Statistical Machine Translation: Parameter Estimation.