MT Evaluation in General

Revision as of 14:50, 26 January 2015

Lecture video: web TODO Youtube

{{#ev:youtube|_QL-BUxIIhU|800|center}}

Data Splits

Available data is usually split into several parts, e.g. training, development (held-out) and (dev-)test sets. The training data is used to estimate model parameters, the development set can be used for model selection, hyperparameter tuning, etc., and the dev-test set is used for continuous evaluation of progress (are we doing better than before?).

However, you should always keep an additional (final) test set that is used only very rarely. Because it plays no part in the development process, your system is not biased towards it, so evaluating on the final test set gives a rough estimate of the system's true performance.

The "golden rule" of (MT) evaluation: Evaluate on unseen data!
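A minimal sketch of such a split, assuming the corpus is a list of (source, target) sentence pairs. The function name `split_corpus`, the set sizes and the toy corpus are illustrative assumptions, not part of the lecture material:

```python
import random


def split_corpus(pairs, dev_size, devtest_size, test_size, seed=0):
    """Shuffle sentence pairs and cut off dev, dev-test and final test sets.

    Hypothetical helper: the sizes are arbitrary choices for illustration.
    """
    held_out = dev_size + devtest_size + test_size
    if held_out >= len(pairs):
        raise ValueError("held-out sets leave no training data")

    shuffled = list(pairs)
    # A fixed seed keeps the split reproducible between runs.
    random.Random(seed).shuffle(shuffled)

    test_end = test_size
    devtest_end = test_end + devtest_size
    dev_end = devtest_end + dev_size
    return {
        "test": shuffled[:test_end],               # final test set, used rarely
        "devtest": shuffled[test_end:devtest_end],  # continuous evaluation
        "dev": shuffled[devtest_end:dev_end],       # model selection, tuning
        "train": shuffled[dev_end:],                # parameter estimation
    }


# Toy corpus standing in for real parallel data.
corpus = [(f"source sentence {i}", f"target sentence {i}") for i in range(1000)]
splits = split_corpus(corpus, dev_size=100, devtest_size=100, test_size=100)
for name, part in splits.items():
    print(name, len(part))
```

The four parts are disjoint by construction, so no sentence pair seen during training or tuning can leak into the final test set.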

Approaches to Evaluation

Let us first introduce the example that we will use throughout the section:

Example Sentence + Translations

Original German sentence:

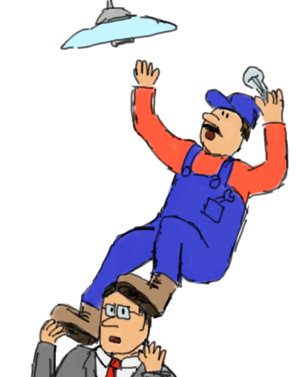

- Arbeiter stürzte von Leiter: schwer verletzt

English reference translation:

- Worker falls from ladder: seriously injured

| # | MT Output | Notes |
|---|---|---|
| 1 | Workers rushed from director: Seriously injured | plural (workers), bad choice of verb (rushed), Leiter mistranslated as director |
| 2 | Workers fell from ladder: hurt | plural (workers), intensifier missing |
| 3 | Worker rushed from ladder: schwer verletzt | bad choice of verb (rushed), tail is left untranslated |
| 4 | Worker fell from leader: heavily injures | Leiter translated as leader (not a typo, a bad lexical choice), poor morphological choices |

Absolute Rating

We put each translation into a category that best describes its quality. The following categories can be used:

| Category | Description |
|---|---|
| Worth publishing | Translation is almost perfect, can be published as-is. |
| Worth editing | Translation contains minor errors which can be quickly fixed by a human post-editor. |
| Worth reading | Translation contains major errors but can be used for rough understanding of the text (gisting). |

If we define our categories like this, all four example translations above probably fall into the worth reading bin.
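As a rough sketch, absolute ratings of this kind can be recorded as one category label per translation and then tallied. The `Rating` enum and the judgments below are hypothetical: they simply encode the guess above that every example output lands in the worth reading bin, and are not real annotation data:

```python
from collections import Counter
from enum import Enum


class Rating(Enum):
    """The three absolute-rating categories from the table above."""
    WORTH_PUBLISHING = "worth publishing"
    WORTH_EDITING = "worth editing"
    WORTH_READING = "worth reading"


# Hypothetical judgments for the four example MT outputs.
judgments = {
    "Workers rushed from director: Seriously injured": Rating.WORTH_READING,
    "Workers fell from ladder: hurt": Rating.WORTH_READING,
    "Worker rushed from ladder: schwer verletzt": Rating.WORTH_READING,
    "Worker fell from leader: heavily injures": Rating.WORTH_READING,
}

# Count how many translations fall into each category.
counts = Counter(judgments.values())
for rating in Rating:
    print(f"{rating.value}: {counts[rating]}")
```

A tally like this makes the weakness of the scheme visible: when all outputs share one bin, the categories cannot distinguish between the systems.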

We can also separate our assessment of translation quality into different aspects (or dimensions). One division that has been used extensively for MT evaluation is:

- Adequacy -- how faithfully does the translation capture the meaning of the source sentence?

- Fluency -- is the translation a grammatical, fluent sentence in the target language? (regardless of meaning)

In this case...