Automatic MT Evaluation

| Lecture video: | web TODO [Youtube](https://www.youtube.com/watch?v=Bj_Hxi91GUM&index=5&list=PLpiLOsNLsfmbeH-b865BwfH15W0sat02V) |
|---|---|
| Supplementary materials: | File:Bleu.pdf |
| Exercises: | BLEU PER |

Reference Translations

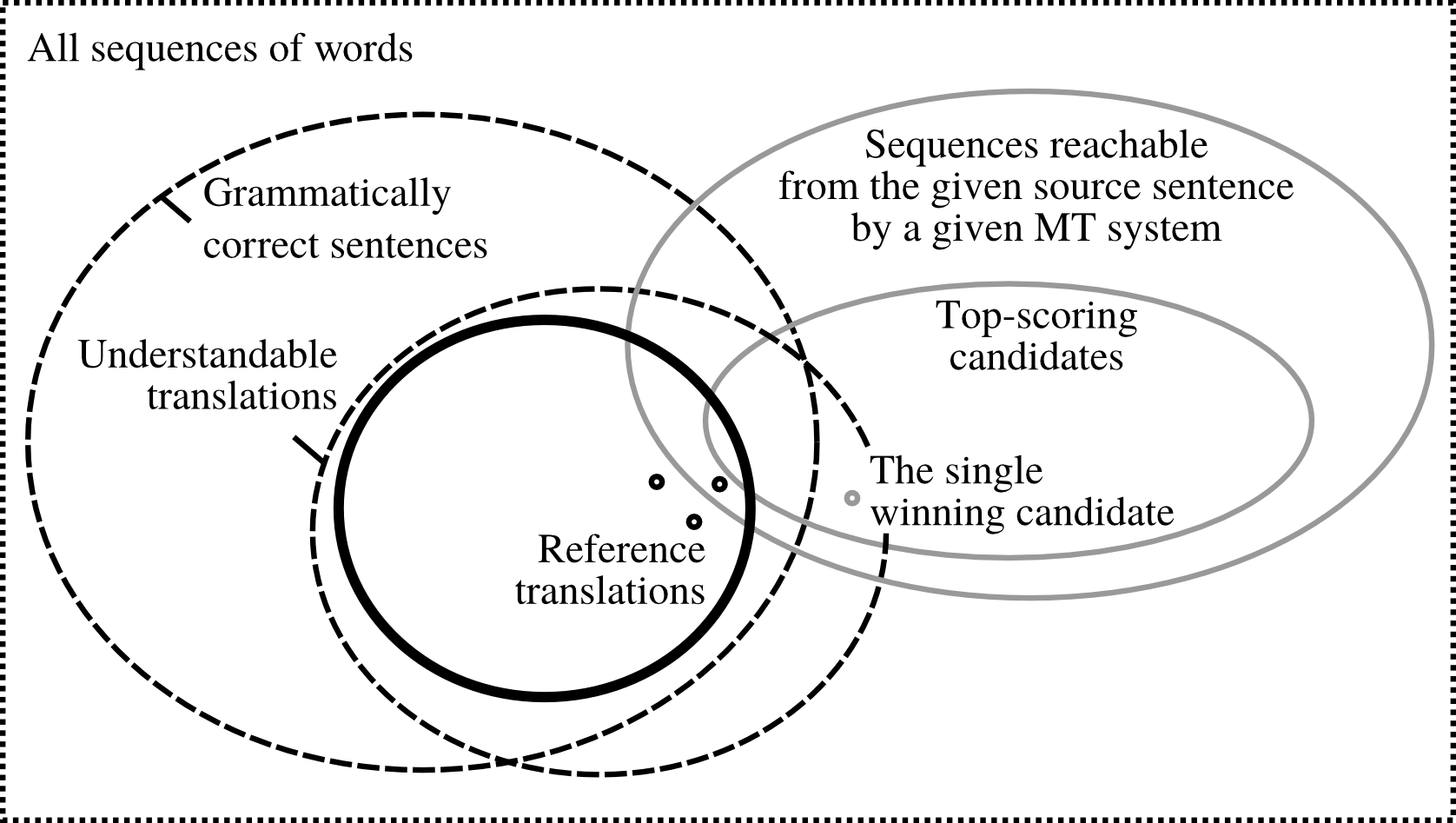

The following picture[1] illustrates the issue of reference translations:

Out of all possible sequences of words in the given language, only some are grammatically correct sentences (). An overlapping set is formed by understandable translations () of the source sentence (note that these are not necessarily grammatical). Possible reference translations can then be viewed as a subset of . Only some of these can be reached by the MT system. Typically, we only have several reference translations at our disposal; often we have just a single reference.

PER

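Position-independent error rate[2] (PER) is a simple measure based on the number of words in the MT output that are confirmed by the reference, regardless of their position. It is calculated using the following formula:

$$\text{PER} = 1 - \frac{\text{correct} - \max(0, c - r)}{r}$$

where $\text{correct}$ is the number of candidate words confirmed by the reference, and $r$ and $c$ are the numbers of tokens in the reference translation and the candidate translation, respectively.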
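The computation can be sketched in a few lines of Python. This is a minimal illustration, assuming the formulation PER = 1 - (correct - max(0, c - r)) / r, whitespace tokenization and case-insensitive matching; the function name `per` is hypothetical and not taken from any library.

```python
from collections import Counter


def per(reference: str, candidate: str) -> float:
    """Position-independent error rate (sketch; tokenization is an assumption)."""
    ref_tokens = reference.lower().split()
    cand_tokens = candidate.lower().split()
    r, c = len(ref_tokens), len(cand_tokens)
    if r == 0:
        raise ValueError("reference must contain at least one token")
    # Bag-of-words overlap: each reference token can confirm one candidate token.
    correct = sum((Counter(ref_tokens) & Counter(cand_tokens)).values())
    # Surplus candidate words are penalized via max(0, c - r).
    return 1.0 - (correct - max(0, c - r)) / r


reference = "On the happiness of dreaming camels"
print(per(reference, "The happiness of dreaming camels"))
print(per(reference, "camels dreaming of happiness the"))  # word order is ignored
```

Both calls give the same value, 1 - 5/6 (about 0.17), because five of the six reference words are found in the output and their position plays no role.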

BLEU

BLEU[3] (Bilingual evaluation understudy) remains the most popular metric for automatic evaluation of MT output quality.

While PER only looks at individual words, BLEU also considers sequences of words. Informally, we can describe BLEU as the amount of overlap of n-grams between the candidate translation and the reference (more specifically unigrams, bigrams, trigrams and 4-grams, in the standard formulation).

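The formal definition is as follows:

$$\text{BLEU} = \text{BP} \cdot \exp\left(\sum_{n=1}^{N} w_n \log p_n\right)$$

where $N = 4$ (almost always) and $w_n = \frac{1}{N}$. $p_n$ stands for n-gram precision, i.e. the fraction of n-grams in the candidate translation which are confirmed by the reference.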

Each reference n-gram can be used to confirm the candidate n-gram only once (clipping), making it impossible to game BLEU by producing many occurrences of a single common word (such as "the").

BP stands for brevity penalty. Since BLEU is a kind of precision, short outputs (which only contain words that the system is sure about) would score highly without BP. This penalty is defined simply as:

$$\text{BP} = \begin{cases} 1 & \text{if } c > r \\ e^{1 - r/c} & \text{if } c \le r \end{cases}$$

where $r$ and $c$ are again the numbers of tokens in the reference translation and the candidate translation, respectively.
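The two ingredients described above, clipped n-gram counts and the brevity penalty, can be sketched as follows. This is an illustrative sketch rather than a reference implementation: the helper names (`ngrams`, `clipped_counts`, `brevity_penalty`) are hypothetical, and the BP definition assumed is 1 if the candidate is longer than the reference and e^(1 - r/c) otherwise.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Return all n-grams of order n as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def clipped_counts(cand_tokens, ref_tokens, n):
    """Return (confirmed, total) n-gram counts for the candidate."""
    cand_counts = Counter(ngrams(cand_tokens, n))
    ref_counts = Counter(ngrams(ref_tokens, n))
    # Clipping: an n-gram is confirmed at most as often as it occurs in the reference.
    confirmed = sum(min(count, ref_counts[gram]) for gram, count in cand_counts.items())
    return confirmed, sum(cand_counts.values())


def brevity_penalty(c, r):
    """c = candidate length, r = reference length (in tokens)."""
    if c == 0:
        return 0.0  # assumption of this sketch: an empty output gets no credit
    return 1.0 if c > r else math.exp(1.0 - r / c)


# Repeating a common word does not help: only 2 of the 4 "the" are confirmed.
print(clipped_counts("the the the the".split(), "the cat sat on the mat".split(), 1))
print(brevity_penalty(5, 6))
```

The first call returns `(2, 4)`, i.e. a unigram precision of 0.5 instead of the unclipped 1.0.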

Example

Consider the following situation:

| | Sentence | Confirmed 1-grams | Confirmed 2-grams | Confirmed 3-grams | Confirmed 4-grams |
|---|---|---|---|---|---|
| Source | Vom Glück der träumenden Kamele | | | | |
| Reference | On the happiness of dreaming camels | | | | |
| MT Output | The happiness of dreaming camels | 5 | 4 | 3 | 2 |

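The number of confirmed MT n-grams is 5, 4, 3, 2 respectively for unigrams, bigrams etc. (matching is case-insensitive here, so "The" is confirmed by "the"). The MT output is one word shorter than the reference (5 tokens against 6), therefore:

$$\text{BP} = e^{1 - 6/5} = e^{-0.2} \approx 0.82$$

The MT output contains 5 unigrams, 4 bigrams, 3 trigrams and 2 4-grams in total, and all of them are confirmed. The geometric mean of precisions is:

$$\exp\left(\frac{1}{4}\left(\log\frac{5}{5} + \log\frac{4}{4} + \log\frac{3}{3} + \log\frac{2}{2}\right)\right) = 1$$

Note that you can equivalently take the fourth root of the product of the precisions, i.e.

$$\sqrt[4]{\frac{5}{5} \cdot \frac{4}{4} \cdot \frac{3}{3} \cdot \frac{2}{2}} = 1$$

The final BLEU score is then $0.82 \cdot 1 \approx 0.82$. The output is penalized only for being too short.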

BLEU is often multiplied by 100 for readability.
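The example can be checked with a short script. This is a minimal sketch of single-reference, sentence-level BLEU, assuming whitespace tokenization, case-insensitive matching (so that "The" is confirmed by "the") and uniform weights; the function name `sentence_bleu_sketch` is hypothetical. Under these assumptions every n-gram of the MT output is confirmed, so the score equals the brevity penalty.

```python
import math
from collections import Counter


def ngram_counts(tokens, n):
    """Count all n-grams of order n in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def sentence_bleu_sketch(candidate: str, reference: str, max_n: int = 4) -> float:
    cand = candidate.lower().split()
    ref = reference.lower().split()
    log_precisions = []
    for n in range(1, max_n + 1):
        cand_counts = ngram_counts(cand, n)
        ref_counts = ngram_counts(ref, n)
        # Counter intersection keeps the minimum count, i.e. it clips.
        confirmed = sum((cand_counts & ref_counts).values())
        total = sum(cand_counts.values())
        if confirmed == 0:
            return 0.0  # one zero precision zeroes the geometric mean
        log_precisions.append(math.log(confirmed / total))
    c, r = len(cand), len(ref)
    bp = 1.0 if c > r else math.exp(1.0 - r / c)
    # Uniform weights w_n = 1 / max_n.
    return bp * math.exp(sum(log_precisions) / max_n)


score = sentence_bleu_sketch("The happiness of dreaming camels",
                             "On the happiness of dreaming camels")
print(f"BLEU = {score:.2f}, or {100 * score:.0f} when multiplied by 100")
```

The script prints `BLEU = 0.82, or 82 when multiplied by 100`.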

BLEU is a document-level metric. This means that counts of confirmed n-grams are collected for all sentences in the translated document and then the geometric mean of n-gram precisions is computed from the accumulated counts. For a single sentence, BLEU is often zero: there is frequently no matching 4-gram or even trigram, and a single zero precision makes the whole geometric mean zero.
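The difference between sentence-level and document-level computation can be sketched as follows. The code is an illustration only: it assumes one reference per sentence, whitespace tokenization and case-insensitive matching, and `corpus_bleu_sketch` is a hypothetical name. Counts and lengths are accumulated over all sentences before any precision is computed.

```python
import math
from collections import Counter


def ngram_counts(tokens, n):
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def corpus_bleu_sketch(candidates, references, max_n=4):
    confirmed = [0] * max_n  # accumulated confirmed n-grams per order
    total = [0] * max_n      # accumulated candidate n-grams per order
    c = r = 0                # accumulated candidate / reference lengths
    for cand_sentence, ref_sentence in zip(candidates, references):
        cand = cand_sentence.lower().split()
        ref = ref_sentence.lower().split()
        c += len(cand)
        r += len(ref)
        for n in range(1, max_n + 1):
            cand_counts = ngram_counts(cand, n)
            confirmed[n - 1] += sum((cand_counts & ngram_counts(ref, n)).values())
            total[n - 1] += sum(cand_counts.values())
    if c == 0 or min(confirmed) == 0:
        return 0.0
    log_mean = sum(math.log(m / t) for m, t in zip(confirmed, total)) / max_n
    bp = 1.0 if c > r else math.exp(1.0 - r / c)
    return bp * math.exp(log_mean)


candidates = ["the cat sat on the mat", "a dog barked"]
references = ["the cat sat on the mat", "the dog barked loudly"]
print(corpus_bleu_sketch(candidates[1:], references[1:]))  # second sentence alone
print(corpus_bleu_sketch(candidates, references))          # both sentences together
```

Scored alone, the second sentence gets 0.0 because it has no confirmed trigram or 4-gram. Scored together with the first sentence, the accumulated counts are all positive and the result is non-zero.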

Multiple Reference Translations

BLEU supports multiple references. In that case, if an n-gram in the MT output is confirmed by any of the reference translations, it is counted as correct. If an n-gram occurs multiple times, it has to be seen in one of the references multiple times as well.

The original paper is not clear about BP in this case. The usual practice is to take the reference translation which is closest in length to the MT output and calculate BP from that. (Note that even this specification is not unambiguous since there can be two closest references to the given hypothesis, the longer and the shorter one.)
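One way to implement both points is sketched below. Clipping against several references uses, for each n-gram, the maximum count observed in any single reference. For BP, the reference closest in length to the MT output is chosen; ties are broken here in favor of the shorter reference, which is an assumption of this sketch and not something the original paper prescribes. The function names are hypothetical.

```python
from collections import Counter


def ngram_counts(tokens, n):
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def confirmed_ngrams(cand, refs, n):
    """Return (confirmed, total) n-gram counts for one candidate and several references."""
    max_ref_counts = Counter()
    for ref in refs:
        # Counter union keeps, per n-gram, the maximum count over the references.
        max_ref_counts |= ngram_counts(ref, n)
    cand_counts = ngram_counts(cand, n)
    confirmed = sum((cand_counts & max_ref_counts).values())
    return confirmed, sum(cand_counts.values())


def closest_ref_length(c, ref_lengths):
    """Pick the reference length closest to c; ties go to the shorter one (assumption)."""
    return min(ref_lengths, key=lambda r: (abs(r - c), r))


cand = "the the the".split()
refs = ["the cat".split(), "the the dog".split()]
print(confirmed_ngrams(cand, refs, 1))  # (2, 3)
print(closest_ref_length(5, [6, 4]))    # 4
```

Only two of the three occurrences of "the" are confirmed, because no single reference contains the word more than twice.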

Other Metrics

- Results of the WMT14 Metrics Shared Task[4] (WMT metrics) -- an annual shared task in automatic evaluation of MT, see the task web page.

- Translation Edit Rate[5] (TER) -- an edit-distance based metric on the level of phrases

- METEOR[6] -- a robust metric with support for paraphrasing

Exercises

References

1. Ondřej Bojar, Matouš Macháček, Aleš Tamchyna, Daniel Zeman. Scratching the Surface of Possible Translations
2. C. Tillmann, S. Vogel, H. Ney, A. Zubiaga, H. Sawaf. Accelerated DP Based Search for Statistical Translation
3. Kishore Papineni, Salim Roukos, Todd Ward, Wei-Jing Zhu. BLEU: a Method for Automatic Evaluation of Machine Translation
4. Matouš Macháček, Ondřej Bojar. Results of the WMT14 Metrics Shared Task
5. Matthew Snover, Bonnie Dorr, Richard Schwartz, Linnea Micciulla, John Makhoul. A Study of Translation Edit Rate with Targeted Human Annotation
6. Alon Lavie, Michael Denkowski. The METEOR Metric for Automatic Evaluation of Machine Translation
